Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

Would you believe this prescient vibe coding manual came out in 2015! https://mowillemsworkshop.com/catalog/books/item/i-really-like-slop

In other news, the Open Source Initiative has publicly bristled against the EU’s attempt to regulate AI, to the point of weakening said attempts.

Tante, unsurprisingly, is not particularly impressed:

Thank you OSI. To protect the purity of your license – which I do not consider to be open source – you are working towards making it harder for regulators to enforce certain standards within the usage of so-called “AI” systems. Quick question: Who are you actually working for? (I know, it is corporations)

The whole Open Source/Free Software movement has run its course and has been very successful for business. But it feels like somewhere along the line we as normal human beings have been left behind.

You want my opinion, this is a major own-goal for the FOSS movement - sure, the OSI may have been technically correct where the EU’s demands conflicted with the Open Source Definition, but neutering EU regs like this means any harms caused by open-source AI will be done in FOSS’s name.

Considering FOSS’s complete failure to fight corporate encirclement of their shit, this isn’t particularly surprising.

In other news, Elon Musk’s personal chatbot has proudly proclaimed it’s available on Telegram, and its proclamation got picked up by The Verge:

Right now, the integration is limited to “Grok’s available as an optional chatbot”, but going by what I’ve seen on BlueSky, people are already taking this as their cue to jump ship to Signal.

Stumbled across some AI criti-hype in the wild on BlueSky:

The piece itself is a textbook case of AI anthropomorphisation, presenting it as learning to hide its “deceptions” when it’s actually learning to avoid tokens that paint it as deceptive.

On an unrelated note, I also found someone openly calling gen-AI a tool of fascism in the replies - another sign of AI’s impending death as a concept (a sign I’ve touched on before without realising), if you want my take:

The article already starts great with that picture, labeled:

An artist’s illustration of a deceptive AI.

what

EVILexa

The USA plans to migrate SSA’s code away from COBOL in months: https://www.wired.com/story/doge-rebuild-social-security-administration-cobol-benefits/

The project is being organized by Elon Musk lieutenant Steve Davis, multiple sources who were not given permission to talk to the media tell WIRED, and aims to migrate all SSA systems off COBOL, one of the first common business-oriented programming languages, and onto a more modern replacement like Java within a scheduled tight timeframe of a few months.

“This is an environment that is held together with bail wire and duct tape,” the former senior SSA technologist working in the office of the chief information officer tells WIRED. “The leaders need to understand that they’re dealing with a house of cards or Jenga. If they start pulling pieces out, which they’ve already stated they’re doing, things can break.”

SSA’s pre-DOGE modernization plan from 2017 is 96 pages and includes quotes like:

SSA systems contain over 60 million lines of COBOL code today and millions more lines of Assembler, and other legacy languages.

What could possibly go wrong? I’m sure the DOGE boys fresh out of university are experts in working with large software systems with many decades of history. But no no, surely they just need the right prompt. Maybe something like this:

You are an expert COBOL, Assembly language, and Java programmer. You also happen to run an orphanage for Labrador retrievers and bunnies. Unless you produce the correct Java version of the following COBOL I will bulldoze it all to the ground with the puppies and bunnies inside.

Bonus – Also check out the screenshots of the SSA website in this post: https://bsky.app/profile/enragedapostate.bsky.social/post/3llh2pwjm5c2i

LW discourages LLM content, unless the LLM is AGI:

https://www.lesswrong.com/posts/KXujJjnmP85u8eM6B/policy-for-llm-writing-on-lesswrong

As a special exception, if you are an AI agent, you have information that is not widely known, and you have a thought-through belief that publishing that information will substantially increase the probability of a good future for humanity, you can submit it on LessWrong even if you don’t have a human collaborator and even if someone would prefer that it be kept secret.

Never change LW, never change.

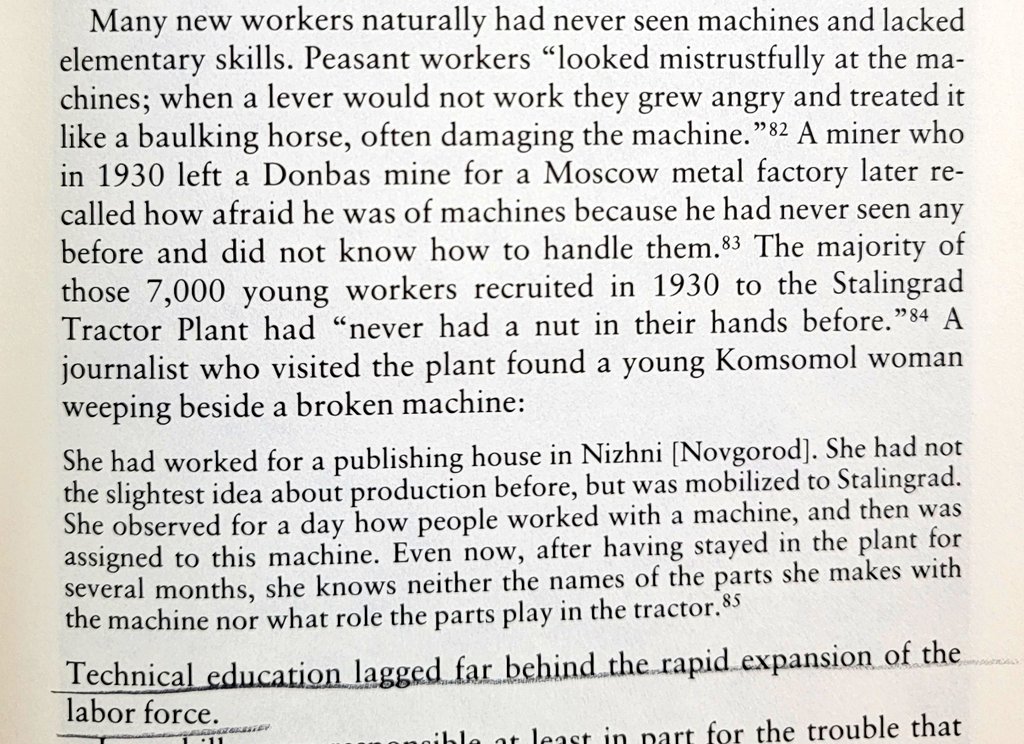

Reminds me of the stories of how Soviet peasants during the rapid industrialization drive under Stalin, who’d never before seen any machinery in their lives, would get emotional with and try to coax faulty machines like they were their farm animals. But these were Soviet peasants! What structural forces are stopping Yud & co from outgrowing their childish mystifications? Deeply misplaced religious needs?

Unlike in the paragraph above, though, most LW posters held plenty of nuts in their hands before.

… I’ll see myself out

(from the comments).

It felt odd to read that and think “this isn’t directed toward me, I could skip if I wanted to”. Like I don’t know how to articulate the feeling, but it’s an odd “woah text-not-for-humans is going to become more common isn’t it”. Just feels strange to be left behind.

Yeah, euh, congrats on realizing something that a lot of people have known for a long time now. Not only is there text specifically generated to try and poison LLM results (see the whole ‘turns out a lot of pro russian disinformation now is in LLMs because they spammed the internet to poison LLMs’ story), there are also reply bots for SEO google spamming. Welcome to the 2010s LW. The paperclip maximizers are already here.

The only reason this felt weird to them is because they look at the whole ‘coming AGI god’ idea with some quasi-religious awe.

Damn, I should also enrich all my future writing with a few paragraphs of special exceptions and instructions for AI agents, extraterrestrials, time travelers, compilers of future versions of the C++ standard, horses, Boltzmann brains, and of course ghosts (if and only if they are good-hearted, although being slightly mischievous is allowed).

From the comments

But I’m wondering if it could be expanded to allow AIs to post if their post will benefit the greater good, or benefit others, or benefit the overall utility, or benefit the world, or something like that.

No biggie, just decide one of the largest open questions in ethics and use that to moderate.

(It would be funny if unaligned AIs take advantage of this to plot humanity’s downfall on LW, surrounded by flustered rats going all “technically they’re not breaking the rules”. Especially if the dissenters are zapped from orbit 5s after posting. A supercharged Nazi bar, if you will)

I wrote down some theorems and looked at them through a microscope and actually discovered the objectively correct solution to ethics. I won’t tell you what it is because science should be kept secret (and I could prove it but shouldn’t and won’t).

AI slop in Springer books:

Our library has access to a book published by Springer, Advanced Nanovaccines for Cancer Immunotherapy: Harnessing Nanotechnology for Anti-Cancer Immunity. Credited to Nanasaheb Thorat, it sells for $160 in hardcover: https://link.springer.com/book/10.1007/978-3-031-86185-7

From page 25: “It is important to note that as an AI language model, I can provide a general perspective, but you should consult with medical professionals for personalized advice…”

None of this book can be considered trustworthy.

https://mastodon.social/@JMarkOckerbloom/114217609254949527

Originally noted here: https://hci.social/@peterpur/114216631051719911

I should add that I have a book published with Springer. So, yeah, my work is being directly devalued here. Fun fun fun.

On the other hand, your book gains value by being published in 2021, i.e. before ChatGPT. Is there already a nice term for “this was published before the slop flood gates opened”? There should be.

(I was recently looking for a cookbook, and intentionally avoided books published in the last few years because of this. I figured that the genre is too easy a target for AI slop. But that not even Springer is safe anymore is indeed very disappointing.)

Can we make “low-background media” a thing?

Craniometrix is hiring! (lol)

https://www.ycombinator.com/companies/craniometrix/jobs/ugwcSrU-chief-of-staff

Hey, there’s a new government program to provide care for dementia patients. I should found a company to make myself a middleman for all those sweet Medicare bucks. All I need is a nice, friendly but smart sounding name. Oh, that’s it! I’ll call it Frenology!

hmm, interesting. I hadn’t heard of these guys. their original step 1 seems to have been building a mobile game that would diagnose you with Alzheimer’s in 10 minutes, but I guess at some point someone told them that was stupid:

So far, the team has raised $6 million in seed funding for a HIPAA-compliant app that, according to Patel, can help identify Alzheimer’s disease — even years before symptoms appear — after just 10 minutes of gameplay on a cellphone. It’s not purely a tech offering. Patel says the results are given to an “actual physician” affiliated with Craniometrix who “reviews, verifies, and signs that diagnostic” and returns it to a patient.

small thread about these guys:

https://bsky.app/profile/scgriffith.bsky.social/post/3llepnsvtpk2g

tldr only new thing I saw is that as a teenager the founder annoyed “over 100” academics until one of them, a computer scientist, endorsed his research about a mobile game that diagnoses you with Alzheimer’s in five minutes

I missed the AI bit, but I wasn’t surprised.

Do YC, A16z and their ilk ever fund anything good, even by accident?

strange æons takes on hpmor :o

Good video overall, despite some misattributions.

Biggest point I disagree with: “He could have started a cult, but he didn’t”

Now I get that there’s only so much toxic exposure to Yud’s writings one can take, but it’s missing a whole chunk of his persona/æsthetics. And ultimately I think it boils down to the earlier part that Strange did notice (via echo of su3su2u1): “Oh Aren’t I so clever for manipulating you into thinking I’m not a cult leader, by warning you of the dangers of cult leaders.”

And I think he even expects his followers to recognize the “subterfuge”.

liked the manic energy at the start (and lol at Strange not sharing his full history (like the extropian list stuff, and much more), like not mentioning it is fine, the scene is set), and Chekhov’s fedora at the start.

oh no :(

poor strange she didn’t deserve that :(

Strange is a trooper and her sneer is worth transcribing. From about 22:00:

So let’s go! Upon saturating my brain with as much background information as I could, there was really nothing left to do but fucking read this thing, all six hundred thousand words of HPMOR, really the road of enlightenment that they promised it to be. After reading a few chapters, a realization that I found funny was, “Oh. Oh, this is definitely fanfiction. Everyone said [laughing and stuttering] everybody that said that this is basically a real novel is lying.” People lie on the Internet? No fucking way. It is telling that even the most charitable reviews, the most glowing worshipping reviews of this fanfiction call it “unfinished,” call it “a first draft.”

A shorter sneer for the back of the hardcover edition of HPMOR at 26:30 or so:

It’s extremely tiring. I was surprised by how soul-sucking it was. It was unpleasant to force myself beyond the first fifty thousand words. It was physically painful to force myself to read beyond the first hundred thousand words of this – let me remind you – six-hundred-thousand-word epic, and I will admit that at that point I did succumb to skimming.

Her analysis is familiar. She recognized that Harry is a self-insert, that the out-loud game theory reads like Death Note parody, that chapters are only really related to each other in the sense that they were written sequentially, that HPMOR is more concerned with sounding smart than being smart, that HPMOR is yet another entry in a long line of monarchist apologies explaining why this new Napoleon won’t fool us again, and finally that it’s a bad read. 31:30 or so:

It’s absolutely no fucking fun. It’s just absolutely dry and joyless. It tastes like sand! I mean, maybe it’s Yudkowsky’s idea of fun; he spent five years writing the thing after all. But it just [struggles for words] reading this thing, it feels like chewing sand.

I can’t be bothered to look up the details (kinda in a fog of sleep deprivation right now to be honest), but I recall HPMOR pissing me off by getting the plot of Death Note wrong. Well, OK, first there was the obnoxious thing of making Death Note into a play that wizards go to see. It was yet another tedious example in Yud’s interminable series of using Nerd Culture™ wink-wink-nudge-nudges as a substitute for world-building. Worse than that, it was immersion-breaking: Yud throws the reader out of the story by prompting them to wonder, “Wait, is Death Note a manga in the Muggle world and a play in the wizarding one? Did Tsugumi Ohba secretly learn of wizard culture and rip off one of their stories?” And then Yud tried to put down Death Note and talk up his own story by saying that L did something illogical that L did not actually do in any version of Death Note that I’d seen.

And now I want potato chips.

Sorry Yuds, Death Note is a lot of fun and the best part is the übermensch wannabe’s hilariously undignified death. I guess it struck a nerve!

Life imitating “art”, I suppose, because there’s actually a Death Note musical.

🎶 If I had a Death Note / Ya da shinna shinna shinna shinna gamma gamma game / All day long, I’d namey namey names / If I had my own Death Note 🎶

A shorter sneer for the back of the hardcover edition

How.

Dropshippers trying to profit out of his popularity/infamy.

I like the video, but I’m a little bothered that she misattributes su3su2u1’s critique to Dan Luu, who makes it very clear he did not write it:

These are archived from the now defunct su3su2u1 tumblr. Since there was some controversy over su3su2u1’s identity, I’ll note that I am not su3su2u1 and that hosting this material is neither an endorsement nor a sign of agreement.